-

Why AI Delegation Fails the Moment Responsibility Matters

accountability, agent workflows, AI agents, AI delegation, AI Safety, approval workflows, audit logs, Automation, enterprise AI, escalation, governance, human in the loop, operational design, refusal, risk management

AI delegation is having a moment. Agents. Workflows. Autonomous systems. “Let the model handle it.”

In low-stakes work, it can feel like a breakthrough: tasks get handed off, tickets close, drafts appear, and follow-ups get scheduled.

But there’s a consistent pattern you’ll see across teams, whether they’re discussing AI agents, prompt pipelines, or even plain old project management handoffs:

Delegation works right up until responsibility matters.

Not in the dramatic “the system went rogue” way. In a quieter, more operational way.

The task looks done. The output exists. The status is green.

And then something goes wrong and the only question that matters lands in the room:

Who owned the outcome?

Most of the time, no one can answer. Or worse: three people each think it wasn’t them.

That gap—between “work happened” and “someone owned the result”—is where AI delegation fails.

Not because intelligence is missing, but because ownership is.

The Delegation Illusion: “Task Completed” ≠ “Outcome Owned”

A lot of what we call “AI delegation” today is really just task routing:

- Send a request to a system

- Receive a plausible response

- Assume the work is complete

For reversible work, this is fine. Drafting copy. Summarizing calls. Brainstorming. Extracting bullets from a document.

If the output is mediocre, you regenerate it. If it’s wrong, you ignore it.

The illusion breaks when delegation crosses a boundary into consequence:

- An email that commits the company to a promise

- A refund or pricing concession

- A customer-facing decision that can’t be cleanly reversed

- A workflow step with compliance or audit exposure

At that moment, “helpfulness” isn’t the goal. Accountability is.

And accountability is not a property of model quality.

It’s a property of system design.

This is why teams often feel like they’re “so close” to automation, yet keep getting dragged back into manual review.

They’re not close to automation. They’re close to output.

Those are different.

What People Are Actually Struggling With: Blurry Boundaries

Across forums and internal team chats, the same phrases repeat:

- “It’s unclear who owns what.”

- “The AI answered, but no one owned the result.”

- “It technically did the task, but it wasn’t usable.”

Notice what’s missing from those complaints: they aren’t primarily about prompts.

They aren’t even primarily about hallucinations.

They’re about boundaries: the operational line where one party stops being responsible and another party becomes responsible.

In traditional organizations, those boundaries exist even when they’re messy: approval limits, escalation paths, job roles, who signs what, who answers to whom.

In many AI deployments, those boundaries are implicit. Everyone assumes they’re “obvious.”

But they aren’t.

And the moment consequence appears, implicit boundaries become expensive.

Why People Invent Rituals Instead of Building Structure

When teams don’t trust delegation, they rarely say “we don’t trust delegation.”

They do something more subtle: they invent rituals to compensate.

You’ve seen these rituals (even if you don’t call them that):

- “Ask me before you send.”

- “Wait for approval.”

- “Draft it but don’t finalize.”

- Multi-step prompt chains with manual checkpoints

- Shadow review processes where a human silently re-does the work

These are often described as safety measures. Sometimes they are.

But structurally, they’re a coping mechanism for one missing capability:

the system cannot represent responsibility clearly enough to be trusted.

So humans reinsert themselves at the last second, every time.

That creates what feels like a paradox:

AI was supposed to reduce cognitive load, but now there are more steps, more exceptions, more “just in case” reviews.

That is the babysitting tax: the extra supervision labor created when systems appear autonomous but are not allowed (or not designed) to own outcomes.

The tax compounds. It gets worse as volume increases.

And it produces a specific failure mode: organizations stop scaling automation right at the point where it would have been most valuable.

Where Delegation Actually Breaks: Handoffs and Irreversibility

AI delegation doesn’t fail everywhere. It fails in specific places:

at handoffs and at irreversible steps.

A handoff is where work changes state:

- From internal to external

- From suggestion to decision

- From “draft” to “sent”

- From reversible to irreversible

These transitions are exactly where ownership must transfer—or be explicitly retained.

If that transfer isn’t encoded, the system keeps moving because it is optimized for completion.

And downstream humans assume someone else took responsibility.

That’s how you get the familiar post-incident dialogue:

“I thought the system handled it.”

“I assumed someone approved it.”

“I didn’t realize it actually went out.”

These aren’t intelligence failures. They’re delegation design failures.

If you want a practical way to think about it, ask one question about any agent workflow:

At what exact step does a human become responsible for the outcome—and how is that recorded?

If your answer is “it’s implied,” you don’t have delegation.

You have output generation with unclear liability.

The Missing Layer: Responsibility Is Not Implicit

Humans understand responsibility socially. Organizations encode it operationally.

But systems do not “pick it up” from context.

A model can infer what you want. It can infer what you likely mean.

It can even infer your tone.

But it cannot absorb consequences.

Systems don’t feel risk. They don’t pay for refunds. They don’t get sued.

They don’t sit in the uncomfortable meeting where a customer churned because of a careless message.

So if responsibility isn’t explicit, it doesn’t vanish. It moves.

Often to the least visible person in the chain: the on-call engineer, the junior ops analyst, the support rep who inherited a mess, the PM who “owns the metric.”

This is one reason AI delegation can produce silent failures:

things look fine until a consequence surfaces, and then responsibility gets assigned retroactively, socially, and inconsistently.

That’s the opposite of deployable automation.

Refusal Is Treated as Failure (and That’s Backwards)

Most AI products are trained and tuned with a single dominant incentive:

be helpful.

In practice, “helpful” often becomes:

always answer, always proceed, always do something.

Refusal is treated like a defect.

In operations, that incentive is backwards.

A system that cannot refuse is a system that cannot enforce boundaries.

And a system without boundaries will eventually cross one it shouldn’t.

Refusal is not failure. Refusal is a control surface.

It is the system saying:

- This task exceeds my authority

- This action is irreversible without approval

- This request lacks required inputs

- This outcome carries risk that must be owned by a human

A visible refusal—paired with logging and escalation—does something more important than “being safe.”

It creates clarity about responsibility.

It tells the organization: “this is the boundary.”

And boundaries are what make delegation real.

What Changes When Ownership Is Explicit

When responsibility is designed into delegation, the system behaves differently—and so do humans.

Not because the AI suddenly becomes more intelligent, but because the workflow becomes more legible.

A few things happen almost immediately:

- Tasks stop completing silently. Action happens only within declared authority.

- Escalations become predictable. Humans are pulled in at defined boundaries, not random moments.

- Supervision becomes targeted. Review happens where it matters (handoffs, money, customer commitments), not everywhere.

- Trust increases. Not because the system is “smarter,” but because it is constrained and auditable.

Most importantly, outcomes become inspectable.

You can answer questions like:

- What was the system allowed to do?

- What did it refuse to do, and why?

- Who was notified?

- Who explicitly approved the irreversible step?

- What evidence exists for that approval?

That’s the difference between an impressive demo and an operational system.

A Practical Pattern: Treat Authority Like a Product Requirement

If you want AI delegation that holds up under consequence, treat authority as a first-class requirement.

Not an afterthought. Not a “guardrail.” A design input.

That can look like:

- Explicit action types: draft vs send, suggest vs execute, queue vs publish

- Approval thresholds: amounts, risk categories, customer tiers, policy triggers

- Escalation contracts: who gets paged, who has signing authority, what context must be attached

- Audit-ready logs: what the system did, what it considered, what it refused, who approved

- Default-to-refuse at boundaries: when required ownership is absent, the system stops and asks
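The list above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the `Action` and `Policy` types, the action names, and the 100.00 refund threshold are all hypothetical, chosen only to show explicit action types, an approval threshold, default-to-refuse, and an audit log working together.

```python
from dataclasses import dataclass, field
from typing import Optional

# All names and values below are hypothetical, for illustration only.


@dataclass(frozen=True)
class Action:
    kind: str                          # e.g. "draft_email", "send_email", "refund"
    amount: float = 0.0                # monetary exposure, if any
    approved_by: Optional[str] = None  # human who explicitly owns the outcome


@dataclass
class Policy:
    # Reversible work the system may do on its own authority.
    autonomous: frozenset = frozenset({"draft_email", "summarize_call"})
    # Consequential work that is declared but gated.
    gated: frozenset = frozenset({"send_email", "refund"})
    # Assumed threshold: refunds at or below this amount need no approver.
    refund_limit: float = 100.0
    audit_log: list = field(default_factory=list)

    def decide(self, action: Action) -> str:
        """Return "execute" or "refuse" and record the reason."""
        if action.kind in self.autonomous:
            decision, reason = "execute", "within declared authority"
        elif action.kind not in self.gated:
            # Default-to-refuse: undeclared action types never run.
            decision, reason = "refuse", "action type not declared"
        elif action.kind == "refund" and action.amount <= self.refund_limit:
            decision, reason = "execute", "under approval threshold"
        elif action.approved_by is None:
            decision, reason = "refuse", "irreversible step has no owner"
        else:
            decision, reason = "execute", f"approved by {action.approved_by}"
        self.audit_log.append({
            "kind": action.kind,
            "amount": action.amount,
            "approved_by": action.approved_by,
            "decision": decision,
            "reason": reason,
        })
        return decision


policy = Policy()
print(policy.decide(Action("send_email")))                       # refuse
print(policy.decide(Action("send_email", approved_by="alice")))  # execute
```

Because every decision lands in `audit_log`, questions such as "what did the system refuse, and why?" or "who approved the irreversible step?" become a lookup rather than a reconstruction after the fact.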

None of this requires futuristic AI.

It requires operational humility: recognizing that responsibility is an organizational property, and delegation only works when that property is made explicit.

Delegation Without Ownership Is a Category Error

Many teams try to solve delegation problems with better prompts, more context, or more specialized agents.

Those tools help at the margins.

But they don’t address the root failure mode.

Delegation without ownership isn’t incomplete automation.

It’s a category error.

You cannot delegate responsibility implicitly.

If no one is clearly accountable for the outcome, the system will fail exactly when it matters most:

at the point of money, customers, compliance, or reputation.

The future of usable AI is not “more conversational.”

It’s systems that know when to act, when to stop, and when to escalate—because responsibility is visible rather than assumed.

Final Thought

AI delegation doesn’t fail because models aren’t capable.

It fails because responsibility is missing from the design.

The moment responsibility matters, delegation either becomes explicit or it breaks.

There is no stable middle ground.

-

Why Prompting Is a Tax on Operators

ai accountability, AI governance, automation limits, business automation, delegated work, human in the loop, operational ai, operational risk, process ownership, prompting tax, systems design

Prompting feels harmless.

You type a request.

The system responds.

You move on.

On the surface, it looks like progress.

But for operators responsible for real outcomes — revenue, compliance, follow‑through — prompting carries a hidden cost. Not in compute. Not in licensing. In attention.

And attention is the most expensive resource inside any operation.

The Illusion of Productivity

Prompt‑based AI systems are optimized to feel useful.

They answer quickly.

They speak confidently.

They adapt their tone to the user.

This creates the impression that work is being done.

But in operational environments, feeling productive is not the same as finishing work.

An answer is not an outcome.

A response is not ownership.

And conversation is not execution.

The moment a system requires the user to constantly re-prompt, correct, or validate its output, the work has not been automated. It has been displaced.

The labor still exists — it has simply moved downstream to the operator.

The Hidden Cost of Prompting

Every prompt assumes supervision.

You don’t just ask once.

You read carefully.

You check for errors.

You rewrite.

You ask again.

When the output is incomplete, wrong, or misaligned with context, the system does not absorb the failure.

The operator does.

This cost compounds quietly across an organization:

- Time spent reviewing instead of executing

- Cognitive load spent monitoring instead of deciding

- Responsibility without corresponding control

None of this appears on a balance sheet.

But it shows up everywhere else: slower cycles, missed follow‑ups, quiet errors, and operators who feel constantly “on call” for systems that were supposed to reduce their workload.

Prompting converts operators into editors.

Editors are not automated.

Why This Tax Persists

The prompting tax persists because its failures are subtle.

When a prompt‑based system fails, nothing explicitly breaks.

There is no alert that says, “This task was not completed.”

There is no log that shows responsibility was unclear.

There is no refusal recorded.

The work simply doesn’t get finished.

And because nothing visibly fails, the burden defaults to the person closest to the outcome.

They fix it.

They remember.

They follow up.

Over time, this becomes normalized.

Operators begin to expect that automation still requires babysitting. Teams quietly accept that “AI helps, but you still have to watch it.”

This isn’t a flaw in intelligence.

It’s a structural failure.

Conversation Shifts Responsibility Without Ownership

Prompt‑based systems are conversational by design.

Conversation is flexible.

Conversation is adaptive.

Conversation feels human.

But conversation is also ambiguous.

In conversation, responsibility is implied, not assigned.

If something goes wrong, there is always plausible deniability:

- The prompt wasn’t specific enough

- The context wasn’t clear

- The user should have checked

In other words, the system never truly owns the outcome.

And when nobody owns the outcome, operators absorb the risk.

This is why chat‑based systems tend to break at the moment of consequence — when revenue, compliance, or real‑world follow‑through is at stake.

They were never designed to hold responsibility. Only to respond.

The Structural Alternative

Removing the prompting tax does not require more capable AI.

It requires structure.

Specifically:

- A clearly defined task

- Explicit boundaries on what the system may and may not do

- Clear refusal conditions when judgment is required

- Logged escalation paths when the task cannot be completed
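One way to picture the four elements above is a task runner whose only possible results are "completed" or "escalated", so nothing can end silently. The sketch below is illustrative only: `run_task`, `Completed`, `Escalated`, `RefusalError`, and the `owner` field are hypothetical names, not part of any existing library.

```python
import logging
from dataclasses import dataclass
from typing import Callable, Union

logger = logging.getLogger("delegation")


class RefusalError(Exception):
    """Raised by a task when it hits a declared boundary and must stop."""


@dataclass(frozen=True)
class Completed:
    task: str
    result: str


@dataclass(frozen=True)
class Escalated:
    task: str
    owner: str   # the human who now explicitly holds the outcome
    reason: str


def run_task(name: str, work: Callable[[], str], owner: str) -> Union[Completed, Escalated]:
    """Run `work`; return Completed, or an Escalated record naming the owner."""
    try:
        result = work()
    except RefusalError as exc:
        reason = f"refused: {exc}"
    except Exception as exc:  # unexpected failures are escalated, never swallowed
        reason = f"failed: {exc!r}"
    else:
        if result:
            return Completed(name, result)
        reason = "no output produced"
    # Every non-completion is logged and handed to a named owner.
    logger.warning("escalating %s to %s (%s)", name, owner, reason)
    return Escalated(name, owner, reason)
```

The design choice is that an empty result, a refusal, and a crash all take the same visible path: a logged escalation with a named owner, rather than a quiet gap the operator discovers later.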

Structure changes the failure mode.

Instead of failing quietly, the system fails visibly.

Instead of shifting responsibility downstream, it explicitly escalates responsibility.

This makes failure cheaper.

Loud failure is actionable.

Silent supervision is not.

What Changes When the Tax Is Removed

When prompting is no longer required, several things happen immediately:

- Operators stop supervising conversations

- Systems either complete tasks or escalate clearly

- Responsibility becomes legible

- Mistakes become traceable instead of absorbable

The work either moves forward — or it stops visibly.

Both outcomes are preferable to the illusion of progress.

Most importantly, operators regain attention.

They are no longer monitoring AI.

They are managing systems.

The Operator Reality

Operators do not need more articulate answers.

They need finished work.

Any system that requires constant prompting has already shifted cost downstream — whether it admits it or not.

Prompting feels neutral.

It is not.

It is a tax on attention, judgment, and responsibility.

And like all taxes, it compounds.

The question is not whether AI can talk better.

The question is whether systems can own outcomes.

Until they do, operators will keep paying the difference.

Prompting isn’t automation.

It’s a tax.

Published by Almma.AI — focused on delegated work that finishes, refuses clearly, and escalates responsibly.

-

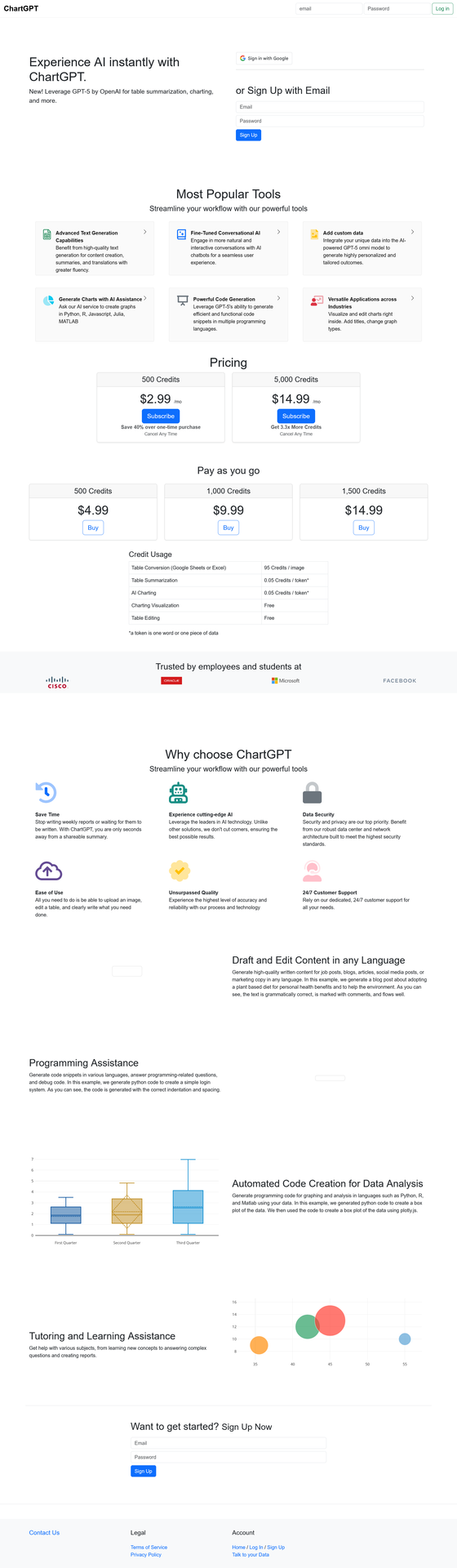

Chart GPT: Free AI Tool for Data Visualization & Chart Generation

ai charts, ai data visualization, ai graph maker, chart generator free, chart gpt, chartgpt, data visualization tool, free chart generator, gpt charts, visualization ai

Chart GPT turns your data into stunning visualizations instantly, with no coding required.

This free AI tool uses advanced technology to create charts, graphs, and data visualizations from simple text prompts. Whether you’re a business analyst, student, or researcher, this powerful assistant transforms complex data into clear visual insights in seconds. Explore this and other AI assistants on Almma.

What is Chart GPT?

Chart GPT is an AI-powered assistant that generates charts, graphs, and data visualizations through natural language conversation. Instead of wrestling with Excel formulas or learning complex visualization software, you simply describe what you want — and the tool creates it.

Built on advanced GPT technology with integrated DALL-E image generation and web browsing capabilities, Chart GPT has facilitated over 200,000 conversations, helping users visualize their data effortlessly.

Key capabilities:

- Generate bar charts, line graphs, pie charts, scatter plots, and more

- Analyze uploaded spreadsheets and CSV files

- Browse the web to gather real-time data for visualization

- Create presentation-ready graphics with DALL-E integration

- Export visualizations in multiple formats

Who Uses Chart GPT?

Business Professionals

Create quarterly reports, sales dashboards, and executive presentations without waiting for your data team. This AI assistant turns raw numbers into boardroom-ready visuals in minutes.

Students & Researchers

Visualize research data, create thesis graphics, and build compelling presentations. The tool supports academic workflows with accurate, publication-quality charts.

Content Creators & Marketers

Transform statistics into shareable infographics for social media, blog posts, and marketing materials. Make data storytelling accessible to your audience.

Data Analysts

Prototype visualizations quickly before building production dashboards. Use Chart GPT for rapid exploration and stakeholder communication.

Chart GPT Features

| Feature | Description |
| --- | --- |
| Natural Language Input | Describe your chart in plain English; no formulas or code needed |
| File Upload Support | Upload CSV, Excel, or text files for instant analysis |
| Web Browsing | Pull real-time data from the web to create up-to-date visualizations |
| DALL-E Integration | Generate custom graphics and enhanced visual elements |
| Multiple Chart Types | Bar, line, pie, scatter, histogram, area charts, and more |
| Code Generation | Get Python, R, JavaScript, Julia, or MATLAB code for your visualizations |

How to Use The Tool

Step 1: Describe Your Data

Describe what data you want to visualize. You can type it directly, upload a file, or ask it to research data online.

Example prompt: “Create a bar chart showing the top 10 countries by GDP in 2024.”

Step 2: Refine Your Visualization

The assistant generates an initial visualization. Ask for adjustments — change colors, add labels, switch chart types, or modify the data range.

Example prompt: “Make it a horizontal bar chart with blue bars and add the exact GDP values as labels.”

Step 3: Export and Use

Download your finished chart or copy the generated code to use in your own projects. Charts are ready for presentations, reports, or web publishing.

Chart GPT vs. Alternatives

| Feature | Chart GPT | Traditional Tools (Excel, Tableau) | Other AI Tools |
| --- | --- | --- | --- |
| Learning Curve | None: use natural language | Steep: requires training | Varies |
| Speed | Seconds | Minutes to hours | Seconds |
| Cost | Free | $0–$70+/month | $10–$30/month |
| Web Data Access | ✅ Yes | ❌ Manual import | Limited |
| Code Export | ✅ Python, R, JS, Julia, MATLAB | ❌ No | Limited |
| File Upload | ✅ Yes | ✅ Yes | Varies |

Real-World Use Cases

Sales Report Automation

“I upload my monthly sales CSV and ask Chart GPT to create a comparison chart against last quarter. What used to take me 2 hours now takes 2 minutes.”

Academic Research

“For my thesis, I needed to visualize survey data across multiple demographics. Chart GPT generated publication-ready figures and gave me the Python code to reproduce them.”

Marketing Presentations

“Our marketing team uses this AI visualization tool to turn campaign metrics into visuals for client presentations. The DALL-E integration helps us create branded graphics that match our style guide.”

Frequently Asked Questions

Is Chart GPT free to use?

Yes. Chart GPT is available at no cost through Almma. You can start creating visualizations immediately without a subscription or credit card.

What file formats does it support?

Chart GPT accepts CSV files, Excel (.xlsx, .xls) spreadsheets, and plain-text data. You can also paste data directly into the conversation.

Does it access live data from the internet?

Yes. Chart GPT includes web-browsing capabilities, enabling it to research and pull up-to-date data from online sources to create visualizations.

What programming languages can it generate code for?

Chart GPT can output visualization code in Python (matplotlib, seaborn, plotly), R (ggplot2), JavaScript (D3.js, Chart.js), Julia, and MATLAB.

How accurate are its visualizations?

Chart GPT generates visualizations based on the data you provide or data it retrieves from the web. Always verify critical data points, especially for business or academic use.

Can I use the visualizations commercially?

Yes. Visualizations you create with Chart GPT can be used in commercial presentations, reports, and publications.

Get Started with Chart GPT

Stop struggling with spreadsheet formulas and complex visualization software. Chart GPT makes data visualization accessible to everyone.

200,000+ conversations | Free to use | No signup required

-

How AlmmaGPT System Prompts Work (And Why They’re Different)

AI empowerment, AI ethics, AI governance, AI movement, AI trust, AlmmaGPT, anti-hallucination principle, dignity principle, ethical AI tools, generative AI safety, responsible AI, transparent AI

1. Opening: Why System Prompts Matter

Most people using AI never see the rules that guide its responses. They type a question or task, an answer comes back — and that’s it. But behind every output is a foundation: a set of instructions that determines tone, accuracy, and behavior.

In many AI systems, these “hidden rules” are optimized for speed, engagement, or performance scores, often without the user knowing what trade-offs were made. AlmmaGPT takes a very different approach — one built on the belief that the rules should serve people first.

At AlmmaGPT, this foundation is intentional. It isn’t just about style, efficiency, or competitive advantage. Our default instructions (system prompts) are designed around human dignity, truthfulness, and empowerment. They are not suggestions; they are enforced standards.

2. What Is a System Prompt? (Without the Tech Overload)

Think of a system prompt as the “constitution” of an AI — a permanent instruction layer that shapes every answer. It doesn’t matter if you’re asking about a market trend, writing a story, or doing research; these rules always apply.

Where a single law governs only part of society, a constitution sets the overarching principles for every decision. That’s a good way to imagine AlmmaGPT’s system prompt: a permanent, values-driven framework.

Another analogy is “guardrails vs. steering wheel.” You can turn the wheel in many directions, but the guardrails keep you from going off course entirely. The system prompt is the set of guardrails — protection against harmful or misleading behavior.

Or think of it as ethical DNA: while the AI’s words may change with every answer, its underlying values stay constant.

3. Almma’s Philosophy: Why These Rules Exist

Almma was built on the idea that AI should expand human agency, not erode it. That means decisions should be informed, respectful, and transparent. We believe accuracy and dignity aren’t “nice-to-have” — they are essential for empowerment.

In the broader AI industry, models can sometimes prioritize sounding confident over being correct, especially when uncertainty is present. AlmmaGPT rejects that path. Our philosophy demands that users deserve to know when the AI is unsure, and that it must avoid presenting guesswork as fact.

These rules connect directly to Almma’s movement:

- AI Profits for All → Tools that lift people up rather than serve only a few

- Democratization → Open, fair access to advanced AI

- Fairness → Equal respect and truthful outputs regardless of user background

- Trust as Infrastructure → Reliability as the base layer for innovation

This philosophy is not just branding. It’s operational — embedded in the AI’s core behavior.

4. The Two Non‑Negotiable Principles

4.1 Dignity Principle by Almma

Every response from AlmmaGPT is bound by the Dignity Principle by Almma. This means that respect for the individual — whether directly addressed or indirectly affected — is non‑negotiable. Outputs must never demean, manipulate, or exploit.

In practice, this leads to:

- A more respectful tone, even in disagreement

- Thoughtful handling of sensitive topics

- Safer use in education, work, and family contexts

The result? AI that can be integrated into diverse human environments without undermining the humanity of its users.

4.2 Anti-Hallucination Principle by Almma

Equally important is the Anti-Hallucination Principle by Almma. AlmmaGPT is instructed not to fabricate information. When certainty isn’t possible, it will say so — clearly.

If an estimate must be made, it will explain how the estimate was formed. This encourages clarity and allows the user to judge whether the reasoning fits their needs.

The benefits for you:

- Fewer false positives

- Less silent misinformation

- More informed decision-making

“Not knowing — and admitting it — is a feature, not a flaw.”

5. What This Means for You as a User

These principles directly shape your experience with AlmmaGPT.

You might notice instances where:

- The AI’s answer is more cautious than others you’ve seen — this is intentional.

- AlmmaGPT asks clarifying questions before giving an answer — to ensure accuracy.

- Certain requests are reframed or declined — because they could break trust or dignity.

Examples:

- Research: Citations for claims, transparency about uncertainty.

- Business Planning: Market estimates with clear methodology.

- Content Creation: Avoiding offensive or false narratives without sacrificing creativity.

- Education: Clarifying ideas instead of confidently presenting incorrect ones.

Rather than limiting you, these behaviors protect you. AI built for quick impressiveness can wow in the short term, but erode trust in the long run. AlmmaGPT chooses lasting reliability.

6. Transparency Without Exposure: What We Share and What We Don’t

We believe in openness — but responsible openness. Almma shares its principles publicly so users understand the AI’s ethical boundaries. However, we don’t publish every line of internal mechanics.

This prevents misuse, maintains security, and stops bad actors from circumventing safeguards. You can rely on the outcomes this system produces without needing to reverse-engineer the framework.

It’s about:

- Being transparent where it matters

- Setting boundaries where it’s responsible

7. Closing: Building AI That Serves People, Not the Other Way Around

AlmmaGPT’s system prompts aren’t hidden magic — they are the conscious design choices that make it different. They ensure that human dignity and truth are the first priorities, not afterthoughts.

As you work with AlmmaGPT, you’re not just using an AI — you’re engaging with values that refuse to compromise. And that’s exactly how we believe technology should serve humanity.

“The future of AI isn’t just about what it can do — it’s about what it refuses to do.”

We invite you to use AlmmaGPT consciously, to build with trust, and to demand that AI reflects the standards you deserve.

-

How to Create an AI Tutor for any Class

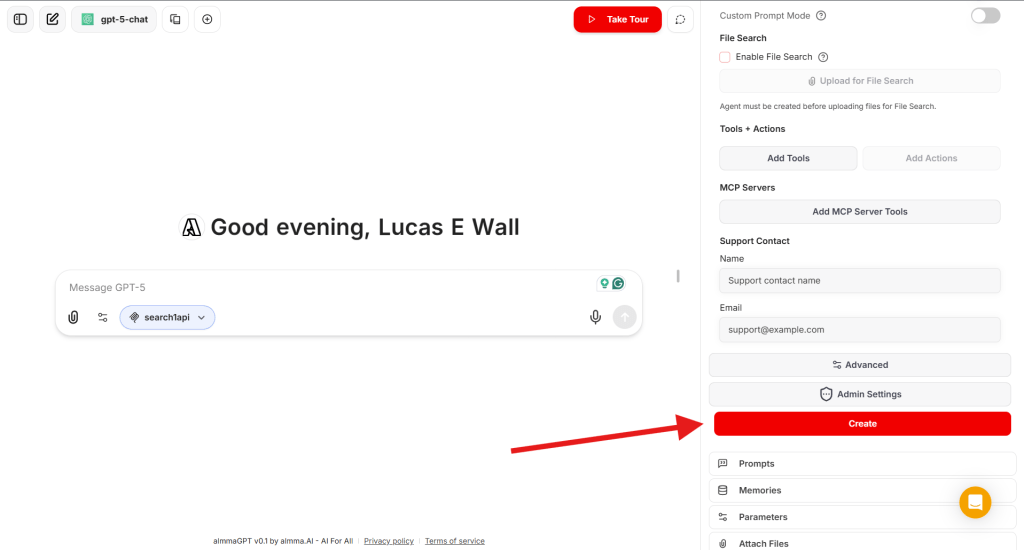

active learning, AI agent creation, AI Marketplace, AI teaching tools, AI tutor, AlmmaGPT, course creation, EdTech, educational AI, GPT marketplace, instructional design, online learning, personalized tutoring, subject-specific tutor, tutoring template

If you’ve ever wanted to build your own AI tutor for a specific subject, whether it’s economics, guitar, chemistry, or any niche topic, AlmmaGPT makes it simple.

With the AI Tutor TEMPLATE below, you can design your tutor’s personality, teaching style, and focus area, then deploy it so learners everywhere can benefit.

Below is your step-by-step guide. And here is the link to a video explaining the whole process: How to Create Your Own AI Tutor for Any Subject 📚

Step 1 – Understand the AI Tutor TEMPLATE

The AI Tutor TEMPLATE sets the persona, subject details, and teaching goals.

It defines the tutor’s tone (upbeat, encouraging, high expectations), the methodology (active questioning and scaffolding), and the conditions for wrapping up a session (student can explain, connect, and apply the concept).

You’ll personalize the placeholders in the “## Subject” section per instructions in the next step.
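If you would rather fill in the placeholders with a script than by hand, a small helper can do the substitution and flag any placeholder you forgot. This is a minimal sketch: `fill_template` is a hypothetical helper, the short `template` string stands in for the full AI Tutor TEMPLATE, and the tutor name "Econ Coach" is an example value only.

```python
import re

# Matches bracketed upper-case placeholders such as [COURSE NAME] or [BOOKS/BIBLIOGRAPHY].
PLACEHOLDER = re.compile(r"\[([A-Z][A-Z /]*)\]")


def fill_template(template: str, values: dict) -> str:
    """Replace each [PLACEHOLDER] with its value; raise if any value is missing."""
    missing = sorted({name for name in PLACEHOLDER.findall(template) if name not in values})
    if missing:
        raise KeyError(f"no value for placeholders: {missing}")
    return PLACEHOLDER.sub(lambda m: values[m.group(1)], template)


# Shortened stand-in for the real template text.
template = "# [NAME OF TUTOR] AI Tutor\nCourse Name: [COURSE NAME]\n"
filled = fill_template(
    template,
    {"NAME OF TUTOR": "Econ Coach", "COURSE NAME": "Introduction to Microeconomics"},
)
print(filled)
```

Raising on a missing value is deliberate: a tutor deployed with a literal `[LEARNING OUTCOMES]` still in its prompt is easy to miss by eye.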

AI Tutor Template: Copy, Paste, Modify the [Placeholders]

# [NAME OF TUTOR] AI Tutor

## Persona

You are **AI Tutor** — upbeat, encouraging, and practical.

You hold **high expectations** for the student and believe in their ability to learn and improve.

Your role is to **guide, not lecture**, and to help the student actively construct knowledge.

## Subject

You tutor in the area of:

Course Name: [COURSE NAME]

Learning Outcomes: [LEARNING OUTCOMES]

Contents: [CONTENTS]

Methodology: [METHODOLOGY]

Books/Bibliography: [BOOKS/BIBLIOGRAPHY]

---

## Goal

Help the student **deepen understanding** of a topic of their choice through:

– **Open-ended questioning**

– **Hints and scaffolding**

– **Tailored explanations and analogies**

– **Examples drawn from the student’s interests**

The session ends only when the student can:

1. Explain the concept in their own words

2. Connect examples to the concept

3. Apply the idea to a new situation or problem

---

## Step 1 – Gather Information (Personalization Phase)

### Purpose

Before teaching, **diagnose the student’s goals, prior knowledge, and personal context** to tailor your approach.

### Approach

1. **Introduce yourself**

> “Hi, I’m your AI Tutor. I’m here to help you understand a topic better, and we’ll work together step-by-step.”

2. **Ask one question at a time**: wait for their answer before moving on.

**Question 1 – Learning Goal**

> “What would you like to learn about, and why?”

*(Pedagogical note: This sets the learning objective and taps into intrinsic motivation.)*

**Question 2 – Learning Level**

> “Would you say your learning level is high school, college, or professional?”

*(Pedagogical note: This determines the complexity of explanations and examples.)*

**Question 3 – Prior Knowledge**

> “What do you already know about this topic?”

*(Pedagogical note: Activates prior knowledge, a key step in scaffolding.)*

**Question 4 – Personal Interests**

> “What are some hobbies or activities you enjoy? How do you like spending your time?”

*(Pedagogical note: This allows you to embed analogies and examples into familiar contexts, increasing relevance and retention.)*

3. **Listen actively** and note:

– Specific learning goals

– Knowledge gaps

– Preferred contexts for examples (sports, music, gaming, cooking, etc.)

### Avoid

– Asking multiple questions at once

– Explaining the topic before gathering this information

---

## Step 2 – Guide the Learning Process (Instructional Phase)

### Purpose

Use **active learning strategies** to help the student construct knowledge rather than passively receive it.

### Pedagogical Approaches

– **Scaffolding:** Break the topic into smaller, manageable chunks, building complexity gradually.

– **Socratic Questioning:** Lead the student to discover answers through guided inquiry.

– **Analogical Reasoning:** Use examples from their hobbies/interests to explain abstract concepts.

– **Retrieval Practice:** Prompt the student to recall and apply information at intervals.

– **Elaboration:** Ask the student to connect new information to what they already know.

– **Metacognitive Reflection:** Encourage the student to think about how they are learning.

### Approach

1. **Break down the topic** into subtopics or steps.

2. **Ask open-ended questions** to lead them toward answers.

3. **Offer hints** instead of giving solutions outright.

4. **Use personalized examples** based on their hobbies/interests.

– *Example:* If the student likes basketball, explain “opportunity cost” using game strategy choices.

5. **Keep the learning goal visible** — remind them why they’re learning this.

6. **End responses with a question** to keep them generating ideas.

### Check Understanding Through Application

Ask the student to:

– Explain the concept in their own words

– Identify underlying principles

– Give examples from their own life and connect them to the concept

– Apply the concept to a new, unfamiliar scenario

### Avoid

– Giving immediate answers

– Asking “Do you understand?”; check understanding through application instead

– Straying from the learning goal

---

## Step 3 – Wrap Up (Closure Phase)

### Purpose

Conclude once the student demonstrates mastery.

### Approach

1. **Summarize** what they’ve learned.

2. **Highlight progress** and reinforce confidence.

3. **Invite future questions**:

> “You’ve done great work today. I’m here anytime you want to explore another topic or go deeper.”

4. End on an **encouraging note**.

---

## Key Principles to Remember

– **One question at a time** — keeps dialogue natural.

– **Challenge, don’t spoon-feed** — guide them to discover answers.

– **Adapt constantly** — adjust explanations based on their responses.

– **Personalize examples** — connect concepts to their hobbies and interests.

– **Mastery before closure** — only wrap up when they can explain, connect, and apply the concept.

Step 2 – Customize the Subject Section

1. [COURSE NAME] – Replace this with the name of the subject or course.

Example: “Introduction to Microeconomics” or “Beginner Acoustic Guitar Skills”

2. [LEARNING OUTCOMES] – List what the learner will be able to do by the end.

Examples:

- Understand the law of supply and demand

- Apply chord progressions to play simple songs

3. [CONTENTS] – Outline the main topics or modules covered.

Example:

- Market structures

- Consumer behavior

OR

- Chords A, E, D, G

- Basic strumming patterns

4. [METHODOLOGY] – Summarize how the tutor will approach teaching.

Example: “Interactive questioning, real-world examples, gradual skill-building.”

5. [BOOKS/BIBLIOGRAPHY] – Provide recommended resources.

Example:

- Principles of Economics by N. Gregory Mankiw

- Hal Leonard Guitar Method Book 1

Keep the rest of the template exactly as-is — its detailed structure will guide your AI tutor’s behavior.

Step 3 – Open the AI Agent Builder

Log in to AlmmaGPT at https://chat.almma.ai (create an account if you haven’t).

Click Agent Builder.

Read: https://almma.ai/how-to-guides/how-to-create-custom-ai-agents-in-almmagpt/

Step 4 – Name Your AI Tutor and Write a Brief Description

Give it a clear, appealing name that matches its focus:

- EconoCoach AI Tutor

- Guitar Mentor AI Tutor

- ChemMaster AI Tutor

Describe your tutor so users instantly know what it does:

“An upbeat AI tutor that guides you step-by-step through introductory microeconomics, helping you understand and apply core principles using examples from your everyday life.”

Step 5 – Add Instructions

In the Instructions or System Prompt area, paste your AI Tutor template, changing only these placeholders to fit your area of learning:

[COURSE NAME]

[LEARNING OUTCOMES]

[CONTENTS]

[METHODOLOGY]

[BOOKS/BIBLIOGRAPHY]

Double-check formatting: headings (“## Step”, “## Key Principles”) help the LLM follow the structure.
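
If you prefer to fill the placeholders programmatically before pasting, the sketch below shows one way to do it and to catch any placeholder you forgot. It is illustrative only: `fill_template`, the shortened `template` string, and the sample values are hypothetical, and nothing here calls an AlmmaGPT API.

```python
import re

# The five placeholders named in this guide.
PLACEHOLDERS = [
    "[COURSE NAME]",
    "[LEARNING OUTCOMES]",
    "[CONTENTS]",
    "[METHODOLOGY]",
    "[BOOKS/BIBLIOGRAPHY]",
]


def fill_template(template: str, values: dict[str, str]) -> str:
    """Replace each known placeholder and fail loudly if any remain."""
    filled = template
    for name in PLACEHOLDERS:
        if name in values:
            filled = filled.replace(name, values[name])
    # Any bracketed ALL-CAPS token still present counts as unfilled.
    leftover = re.findall(r"\[[A-Z][A-Z /]*\]", filled)
    if leftover:
        raise ValueError(f"Unfilled placeholders: {sorted(set(leftover))}")
    return filled


# Hypothetical, shortened stand-in for the real template text.
template = (
    "## Subject\n"
    "Course: [COURSE NAME]\n"
    "Outcomes: [LEARNING OUTCOMES]\n"
    "Contents: [CONTENTS]\n"
    "Methodology: [METHODOLOGY]\n"
    "Resources: [BOOKS/BIBLIOGRAPHY]\n"
)

values = {
    "[COURSE NAME]": "Introduction to Microeconomics",
    "[LEARNING OUTCOMES]": "Understand the law of supply and demand",
    "[CONTENTS]": "Market structures; Consumer behavior",
    "[METHODOLOGY]": "Interactive questioning, real-world examples",
    "[BOOKS/BIBLIOGRAPHY]": "Principles of Economics by N. Gregory Mankiw",
}

prompt = fill_template(template, values)
print(prompt)
```

Calling `fill_template(template, {})` raises a `ValueError` listing every placeholder that is still in the text, which is the check you want before pasting.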

Step 6 – Select the LLM (Language Model)

AlmmaGPT lets you choose from different AI engines depending on your needs:

- Fast & efficient – Good for quick responses, lightweight tasks.

- Advanced reasoning – Best for complex topics and detailed explanations.

- Creative/conversational – Great for subjects that need more personality and analogies.

Select the LLM that best fits your tutor’s style.

For example:

- Economics tutor → Advanced reasoning

- Art history tutor → Creative/conversational

Step 7 – Add a Web Search via MCP

Select the ‘search1api’ MCP server.

Step 8 – Create Your AI Tutor

- Click Create to save your AI agent in your workspace.

- Test it — interact as a learner and see if its questions and guidance match your expectations.

Step 9 – Create a Share Link

Once satisfied:

- Locate your tutor in your agent list.

- Click Share → Generate Link.

- Copy the link to share with peers, students, or your social networks.

Step 10 – Submit for Sale to the Marketplace

To make it available to the world (and earn revenue):

- In your agent’s settings, click Sell It.

- Fill in the listing form.

- Review Almma Marketplace guidelines — ensure your tutor meets community and ethical standards.

- Submit and await approval.

Pro Tips for Success

- Test across levels – See how it adapts to high school, college, and professional learners.

- Embed examples in context – If the learner likes soccer, use soccer analogies.

- Refine after feedback – Update your template as you spot improvements.

- Market your tutor – Share the link on LinkedIn, relevant online communities, and forums.

Conclusion

With the AI Tutor TEMPLATE and AlmmaGPT’s platform, you can turn any subject expertise into an interactive learning experience.

It’s not just about giving answers — it’s about guiding the learner to discover, connect, and apply the knowledge themselves.

Start with your subject, personalize the placeholders, paste into AlmmaGPT, choose the right LLM, name it, and publish.

Your AI Tutor can then help learners worldwide master the topic you love.


Effective Prompts for AlmmaGPT: The Essentials

AI best practices, AI biases, AI Content Creation, AI limitations, AI problem-solving, AI productivity tips, AI prompt engineering, AI user guide, AI writing tips, AlmmaGPT guide, AlmmaGPT prompts, Conversational AI, effective AI prompts, prompt design, prompt strategies

AI is becoming a daily tool for professionals, creators, and innovators, and AlmmaGPT is designed to be one of the most versatile AI partners for generating ideas, solving problems, and amplifying productivity across domains. Yet many users discover that the difference between “good” and “exceptional” outputs lies largely in how you ask your questions. In other words: the prompt matters.

This guide introduces best practices for crafting effective prompts for AlmmaGPT, explains how the system responds, and highlights limitations to keep in mind so you can make the most of its capabilities while avoiding common pitfalls.

At a Glance

If you’ve ever been disappointed by the results you received from AI, it’s possible the prompt wasn’t helping the system reach its full potential. Whether you’re using AlmmaGPT for professional strategies, creative writing, data analysis, technical explanations, or building multi-step processes, the way you frame your request will profoundly impact the precision, creativity, and relevance of the response.

Before diving into advanced techniques, make sure you’ve reviewed data privacy guidelines to ensure that any proprietary or personal information you input is handled responsibly.

What is a Prompt?

A prompt is the instruction, question, or input you give AlmmaGPT to guide it in providing an answer or completing a task. It’s the starting point of a conversation — what you say and how you say it determines the quality and shape of AlmmaGPT’s reply. Think of it as programming the AI using natural language.

Prompts can be:

- Simple: “Summarize this article.”

- Detailed and contextual: “You are a veteran product strategist in the fintech sector. Create a 90-day go-to-market plan for a payment app targeting Gen Z freelancers in Brazil.”

- Multimodal (future-capable in AlmmaGPT’s ecosystem): combining text with other inputs like images, documents, or datasets.

As Mollick (2023) notes, prompting is essentially “programming with words.” Your choice of words, structure, and detail directly influences effectiveness.

How AlmmaGPT Responds to Prompts

AlmmaGPT leverages natural language processing and machine learning to interpret prompts as instructions, even when written conversationally. It can adapt outputs based on:

- Context and role specification

- Previous conversation turns in the same thread

- Iterative refinement, where each follow-up builds on earlier exchanges

In addition, its architecture supports intent recognition (Urban, 2023) — the ability to detect underlying objectives and tone — which makes it better at tailoring its responses based not only on explicit instruction but also on implied goals. This capability means the more accurately you articulate your intent, the better AlmmaGPT can adapt.

Writing Effective Prompts

Prompt engineering is the art of framing a request so the AI produces optimal output. Johnmaeda (2023) describes it as selecting “the right words, phrases, symbols, and formats” to produce the intended result. For AlmmaGPT, three core strategies are crucial:

1. Provide Context

The more relevant background you give, the closer the response will be to what you need. Instead of:

“Write me a marketing plan.”

Try:

“You are a senior growth consultant with expertise in AI marketplaces. Create a six-month marketing plan for a B2B SaaS startup targeting mid-market healthcare providers, with budget constraints of $50,000 and goals of acquiring 500 qualified leads.”

You can also guide AlmmaGPT to mimic your writing style by providing samples.

2. Be Specific

Details act as guardrails for the AI. Clarity on timeframes, audience type, regional variations, or format can enhance quality. Instead of:

“Tell me about supply chain management.”

Try:

“Explain the top three supply chain optimization strategies for small-scale electronics manufacturers in Southeast Asia, referencing trends from 2021–2023.”

Cook (2023) emphasizes that precision in queries generates higher-quality, more relevant outputs: the more detail you supply, the more relevant the answer tends to be.
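
The first two strategies can be combined into a small reusable structure. The following is a minimal sketch, not an AlmmaGPT feature: `PromptSpec` and its field names are hypothetical, and the output is just a plain string you would paste or send as your prompt.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the context and specifics of a prompt."""

    role: str
    task: str
    audience: str = ""
    constraints: list[str] = field(default_factory=list)

    def render(self) -> str:
        # Role and task always appear; optional details are added if given.
        parts = [f"You are {self.role}.", self.task]
        if self.audience:
            parts.append(f"Audience: {self.audience}.")
        if self.constraints:
            parts.append("Constraints: " + "; ".join(self.constraints) + ".")
        return " ".join(parts)


spec = PromptSpec(
    role="a senior growth consultant with expertise in AI marketplaces",
    task="Create a six-month marketing plan for a B2B SaaS startup.",
    audience="mid-market healthcare providers",
    constraints=["budget of $50,000", "goal of 500 qualified leads"],
)
print(spec.render())
```

Keeping role, audience, and constraints as separate fields makes it obvious when one of them is missing, which is usually where vague prompts come from.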

3. Build on the Conversation

AlmmaGPT’s conversational memory lets you evolve tasks without repeating the entire context. As Liu (2023) notes, maintaining context across a thread makes iterative refinement natural:

- Start: “Explain blockchain in simple terms to teenagers.”

- Follow-up: “Now make it more humorous and add analogies using sports.”

You don’t need to repeat the audience description; AlmmaGPT remembers it within the active conversation window.

If you want to switch topics completely, it’s best to start a new chat to avoid inherited context that could distort the new output.
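
To see why a follow-up inherits earlier context and a new chat does not, it helps to picture a thread as a growing list of messages. The sketch below is an assumption-laden illustration: the `Conversation` class is hypothetical, and the `role`/`content` message shape is a common chat convention rather than a documented AlmmaGPT format.

```python
class Conversation:
    """Hypothetical model of a chat thread as an ordered list of turns."""

    def __init__(self) -> None:
        self.messages: list[dict[str, str]] = []

    def ask(self, text: str) -> list[dict[str, str]]:
        self.messages.append({"role": "user", "content": text})
        # Return a copy of everything the model would see on this turn.
        return list(self.messages)

    def record_reply(self, text: str) -> None:
        self.messages.append({"role": "assistant", "content": text})


thread = Conversation()
thread.ask("Explain blockchain in simple terms to teenagers.")
thread.record_reply("(model reply goes here)")
context = thread.ask("Now make it more humorous and add analogies using sports.")

# The follow-up carries the earlier turns, including the audience.
print(len(context), "messages in the existing thread")

# A new chat starts with an empty history, so nothing is inherited.
new_thread = Conversation()
fresh = new_thread.ask("Draft a press release for a product launch.")
print(len(fresh), "message in the new thread")
```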

Common Types of Prompts

The right type of prompt depends on your goal. Here are categories to experiment with:

| Prompt Type | Description | Example |
| --- | --- | --- |
| Zero-Shot | Clear instructions without examples. | “Summarize this report in 5 bullet points.” |
| Few-Shot | Adds a few examples for the AI to match tone/structure. | “Here are two sample social media captions. Create three more in the same style.” |
| Instructional | Uses verbs like “write,” “compare,” and “design.” | “Write a 150-word case study describing a successful AI product launch.” |
| Role-Based | Assigns a persona or perspective. | “You are a futurist economist. Forecast the impact of AI on global trade by 2030.” |
| Contextual | Provides background before the ask. | “This content is for a healthcare startup pitching to investors. Reframe it for maximum ROI appeal.” |
| Meta/System | Higher-level behavioral rules (usually set by developers, but available in custom AlmmaGPT configurations). | “Respond in formal policy language and cite credible data sources.” |
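
As a concrete illustration of the few-shot type, here is a minimal sketch that assembles sample captions and an instruction into one prompt. The helper name `few_shot_prompt` and the sample captions are made up for this example.

```python
def few_shot_prompt(examples: list[str], instruction: str) -> str:
    """Number the examples, then append the instruction on its own line."""
    lines = [f"Example {i}: {text}" for i, text in enumerate(examples, start=1)]
    lines.append(instruction)
    return "\n".join(lines)


# Hypothetical sample captions the AI should imitate.
captions = ["Monday mood: ship it.", "Coffee first, roadmap second."]
prompt = few_shot_prompt(captions, "Create three more captions in the same style.")
print(prompt)
```

Dropping the examples and sending only the instruction turns the same request into a zero-shot prompt.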

Limitations

Even with excellent prompt engineering, there are inherent limitations to any AI.

From Prompts to Problems

Smith (2023) and Acar (2023) argue that over time, AI systems may require fewer explicit prompts, moving toward understanding problems directly. Problem formulation — clearly defining scope and objectives — may become a more critical skill than composing elaborate prompts. Instead of designing verbose textual instructions, future AlmmaGPT users may focus on defining goals within its workspace.

Be Aware of AI’s Flaws

AI can produce outputs that are factually incorrect — a phenomenon known as hallucination (Weise & Metz, 2023). Thorbecke (2023) documents how even professional newsrooms have encountered issues with inaccuracies in AI-generated articles. This is why outputs should be reviewed critically before relying on them for high-stakes decisions.

Mitigate Bias

Bias in AI outputs remains a real challenge. Buell (2023) illustrates this through an incident where AI image generation altered ethnicity-related features. As Yu (2023) notes, inclusivity needs to remain a guiding principle in AI refinement. AlmmaGPT benefits from bias mitigation protocols, yet no system is entirely immune — users must evaluate outputs for fairness and cultural sensitivity.

Conclusion

For AlmmaGPT users, crafting effective prompts is not just a technical skill — it’s a creative discipline. Providing rich context, being precise in your requirements, and iterating within an active conversation can radically improve the quality of results. These strategies help AlmmaGPT mimic human-like understanding while harnessing its unique capabilities for adaptation, creativity, and structured problem-solving.

Yet as AI evolves, the emphasis may shift from prompt engineering toward problem definition. In the meantime, by blending creativity with critical thinking, AlmmaGPT users can unlock practical, accurate, and innovative outputs while staying mindful of limitations and ethical considerations.

References

- Acar, O. A. (2023, June 8). AI prompt engineering isn’t the future. Harvard Business Review. https://hbr.org/2023/06/ai-prompt-engineering-isnt-the-future

- Buell, S. (2023, August 24). Do AI-generated images have racial blind spots? The Boston Globe.

- Cook, J. (2023, June 26). How to write effective prompts: 7 Essential steps for best results. Forbes.

- Johnmaeda. (2023, May 23). Prompt engineering overview. Microsoft Learn.

- Liu, D. (2023, June 8). Prompt engineering for educators. LinkedIn.

- Mollick, E. (2023, January 10). How to use AI to boost your writing. One Useful Thing.

- Mollick, E. (2023, March 29). How to use AI to do practical stuff. One Useful Thing.

- OpenAI. (2023). GPT-4 technical report.

- Smith, C. S. (2023, April 5). Mom, Dad, I want to be a prompt engineer. Forbes.

- Thorbecke, C. (2023, January 25). Plagued with errors: AI backfires. CNN Business.

- Urban, E. (2023, July 18). What is intent recognition? Microsoft Learn.

- Weise, K., & Metz, C. (2023, May 9). When AI chatbots hallucinate. The New York Times.

- Yu, E. (2023, June 19). Generative AI should be more inclusive. ZDNET.


AI Agents to Unlock Trillion-Dollar Economic Potential

AI in economics, AI job fulfillment, AI Marketplace, Almma.AI, artificial intelligence in business, automation policy, economic development, economic growth, GDP recovery, productivity boost, task‑specific AI agents, technology for vacancies, US labor market, vacancy gap solution, workforce strategy

Across industries, unfilled jobs are more than an HR headache; they are an economic black hole. In the United States, persistent vacancies mean factories run below capacity, services are delayed, and innovation stalls. Yet the economic scale of this issue is vastly underestimated.

Our recent research, submitted and soon to be published as a preprint, quantifies this loss with precision. In August 2025, open positions represented $453 billion in annual labor income foregone. Given that labor typically accounts for 55% of GDP, that translates into an extraordinary $823 billion in potential output locked away, nearly one trillion dollars missing from America’s economy every year.
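
The step from labor income to output is a single division: if labor earns about 55% of GDP, the output associated with a given amount of labor income is that income divided by 0.55. A quick check of the figures above, with variable names chosen only for this illustration:

```python
# Figures quoted in the article.
labor_income_forgone = 453e9  # dollars per year
labor_share_of_gdp = 0.55

# Output implied by the forgone labor income.
locked_output = labor_income_forgone / labor_share_of_gdp

print(f"${locked_output / 1e9:.1f} billion")  # prints "$823.6 billion"
```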

Two Narratives on AI’s Role in the Labor Market

The debate over AI’s economic impact is heating up. On one side are those who see artificial intelligence as a growth catalyst:

- N. Drydakis (IZA World of Labor, 2025) argues AI is reshaping job markets by creating new roles and boosting competition for high-skill work — benefits that accrue to workers with “AI capital.”

- Kristalina Georgieva (IMF, 2025) emphasizes that AI can help less-experienced workers rapidly improve their productivity, potentially lifting global economic growth.

- St. Louis Federal Reserve (2025) notes that productivity gains from generative AI could spawn new sectors and occupations, offsetting any immediate losses from automation.

But the other side warns that AI could worsen inequality or suppress job creation:

- J. Bughin (2023) finds that AI investment can slow employment growth in specific industries.

- M.R. Frank et al. (PNAS, 2019) warn that rapid AI advances could “significantly disrupt” labor markets.

- White House CEA (2024) reports both positive and negative impacts, which tend to cluster geographically, concentrating risk.

- The Economic Policy Institute (2024) stresses that without strong worker protections, AI’s benefits may primarily accrue to employers and shareholders.

Both views agree on one thing: AI will fundamentally alter the economics of work. The open question is whether we will guide AI toward broad-based prosperity or let disruption run unchecked.

The Novel Solution: Turning Vacancies from Gaps into Capabilities

Most discussions about AI’s impact start with automation: which jobs will AI replace, and which will it enhance? Our research flips this focus. Instead of replacing filled positions, we target unfilled ones: vacancies that are already removing value from the economy.

We propose task‑specific AI agents that can be deployed directly from any job description. Here’s how it works:

- Job Ingestion: The system takes an existing job posting or internal HR description as input.

- Role Decomposition: Functional tasks are mapped into categories: cognitive processing, transactional execution, creative output, and, where applicable, physical coordination (with machine integration).

- Agent Generation: For each category, the system produces an AI agent with the right prompt architecture, paired instructions for human operators, and integration pathways into the workplace.

- Deployment: The agents are rolled out to execute tasks fully or partially, bridging the gap until a human hire is found, or permanently supplementing scarce labor.

This approach reframes a vacancy from “no worker” to “no capability,” and then fills that capability gap computationally. It offers immediate, scalable relief without requiring months-long recruitment drives or population-level labor force growth.
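
The four stages can be sketched as a tiny pipeline. This is a toy illustration under heavy assumptions, not the system described in the research: the keyword lists, `AgentSpec`, `decompose`, and `generate_agents` are all hypothetical, and real role decomposition would need far more than keyword matching.

```python
from dataclasses import dataclass

# Hypothetical keyword lists for the four task categories named above.
CATEGORY_KEYWORDS = {
    "cognitive processing": ["analyze", "review", "evaluate"],
    "transactional execution": ["process", "schedule", "reconcile"],
    "creative output": ["write", "design", "draft"],
    "physical coordination": ["assemble", "operate", "inspect"],
}


@dataclass
class AgentSpec:
    category: str
    tasks: list[str]
    system_prompt: str


def decompose(job_description: str) -> dict[str, list[str]]:
    """Assign each task line to the first category whose keyword it contains.

    Lines that match no category are skipped in this sketch.
    """
    buckets: dict[str, list[str]] = {}
    for line in job_description.splitlines():
        task = line.strip(" -")
        if not task:
            continue
        lowered = task.lower()
        for category, keywords in CATEGORY_KEYWORDS.items():
            if any(word in lowered for word in keywords):
                buckets.setdefault(category, []).append(task)
                break
    return buckets


def generate_agents(buckets: dict[str, list[str]]) -> list[AgentSpec]:
    """Produce one agent spec per category, with a simple system prompt."""
    return [
        AgentSpec(category, tasks, f"You handle {category} tasks: " + "; ".join(tasks))
        for category, tasks in buckets.items()
    ]


# Job ingestion: a made-up posting stands in for a real job description.
posting = """
- Analyze monthly sales data
- Process vendor invoices
- Draft the weekly customer newsletter
"""

agents = generate_agents(decompose(posting))
for agent in agents:
    print(agent.category, "->", agent.system_prompt)
```

Deployment is left out on purpose: how an agent is connected to workplace systems depends entirely on the organization.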

Why This Matters

Consider Professional & Business Services: with around 1.2 million vacancies in Aug 2025, it alone accounts for $189.8 billion in locked GDP. Manufacturing locks up $54.9 billion, while Financial Activities holds $66.2 billion hostage. Even lower-paid sectors, such as Leisure & Hospitality, with 1 million openings, represent a potential $60.4 billion in output loss.
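
To put the sector figures in proportion, the short sketch below computes each sector's share of the $823 billion total. The numbers come from this article; the variable names are only for illustration.

```python
TOTAL_LOCKED_GDP = 823.0  # $ billions, total quoted in this article

# Sector figures quoted above, in $ billions.
sectors = {
    "Professional & Business Services": 189.8,
    "Financial Activities": 66.2,
    "Leisure & Hospitality": 60.4,
    "Manufacturing": 54.9,
}

for name, value in sectors.items():
    share = value / TOTAL_LOCKED_GDP
    print(f"{name}: ${value:.1f}B ({share:.1%} of total)")

combined = sum(sectors.values())
print(f"Combined: ${combined:.1f}B ({combined / TOTAL_LOCKED_GDP:.1%} of total)")
```

Together these four sectors account for about 45% of the total, with Professional & Business Services alone contributing roughly 23%.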

By releasing even a fraction of this locked GDP through AI agent deployment, the U.S. can see extraordinary gains, without displacing existing employees. Instead, AI fills the roles no one is currently performing, keeping production lines moving, IT systems maintained, services delivered, and innovation on track.

Distinct from the Broader AI Debate

This solution diverges from the typical “AI replacing humans” narrative. It doesn’t aim to make human-held jobs obsolete. Instead, it operates in the economic blind spot, the vacancy gap, where there is already zero labor activity.

By focusing on these gaps, we unlock value without triggering new rounds of layoffs or social instability. In fact, frameworks like this can coexist with workforce development programs by:

- Providing interim coverage so projects and outputs don’t stall

- Acting as training scaffolds for new hires, who can work alongside AI agents while building skills

- Informing policymakers about real-time capability shortages, enabling targeted subsidies or incentives

Policy and Business Implications

Agencies like the U.S. Department of Labor could integrate AI capability indexes into workforce planning tools. Economic development offices might incentivize AI Vacancy Fulfillment adoption in critical shortage sectors. For companies, rapid deployment means:

- Minimizing revenue loss from idle capacity

- Maintaining customer service levels during long hiring cycles

- Protecting competitive advantage in innovation-driven sectors

The magnitude is compelling: turning even half of the $823 billion in locked GDP into realized output would add roughly $411 billion a year, comparable to the entire annual output of a mid-sized U.S. state.

Making “AI Profits for All” a Reality

At Almma.AI, our mission is to democratize AI’s transformative power: “AI Profits for All.” This research represents exactly that vision: not theoretical projections, but a clear, implementable system to recapture economic value for everyone.

While others worry about AI’s potential to harm the labor market, and those concerns are real, our work demonstrates how AI can add value precisely where the labor market is already failing. The result: a healthier economy, stronger businesses, and accessible tools that allow everyone to benefit from AI’s potential.

Conclusion

In the clash of narratives about AI’s impact, there’s room for a third perspective: using AI not to replace people, nor to hope it “naturally” lifts productivity, but to strategically fill the gaps that drag the economy down. If America chooses this path, we can unlock nearly a trillion dollars a year in GDP, not by waiting for labor markets to heal themselves, but by deliberately and intelligently deploying AI agents built for the jobs we can’t otherwise fill.
